Common Terms in AI GPU Computing: Machine Learning, Deep Learning, Inferencing & Sensor Fusion

Artificial Intelligence - Transferring Human Abilities to Machines

Artificial intelligence (AI) has been discussed since the 1950s, with alternating highs and lows in popularity. Since 2015, however, its global significance has skyrocketed, largely because graphics processors (GPUs) have become widely available, a development also noted by Diederik Verkest of the IMEC research center.

It is difficult to set a clear boundary for AI, due to the lack of a definitive understanding of intelligence. In general, there is a distinction between strong AI and weak AI. Strong AI has the potential to do anything that a person can, but currently only exists in theory. Weak AI, on the other hand, is already used in various applications, where it can outperform or equal human abilities in certain areas.

With edge AI computers such as NVIDIA Jetson on the rise, we have created this guide to cover the basics of machine learning, deep learning, supervised and unsupervised learning, reinforcement learning, inferencing, perception, and sensor fusion.

Machine Learning - Pattern Recognition

Machine learning is a branch of artificial intelligence in which a system builds connections autonomously from observed data. In essence, it gains understanding through experience. The underlying math is intricate, but the process itself is simple: given vast sets of examples, a system learns to recognize objects such as traffic signs. It does not simply memorize the examples. It detects patterns and trends in the data presented to it and applies that knowledge to data it did not see during the training phase. In this sense, computers can learn without being explicitly programmed.

The downside is that the resulting behavior can be hard to predict, because a learned model lacks transparency. If the training data does not accurately represent reality, the machine’s conclusions will be flawed. And if the model meets a situation that was not covered in training, it may be unable to respond properly. For instance, if a traffic sign is vandalized, the AI may not be able to recognize it.
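To make the idea of learning from examples and then handling unseen data concrete, here is a minimal sketch of a nearest-centroid classifier. It is only one of many possible techniques, and everything in it is an assumption made for illustration: the two features (a "red fraction" and a "roundness" score), the sign labels, and the sample values are all hypothetical.

```python
from math import dist

def fit_centroids(samples):
    """Average the feature vectors of each label to get one centroid per class."""
    sums, counts = {}, {}
    for features, label in samples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}

def predict(centroids, features):
    """Return the label whose centroid lies closest to the given features."""
    return min(centroids, key=lambda label: dist(centroids[label], features))

# Hypothetical training data: (red fraction, roundness) -> sign type
training = [
    ((0.90, 0.20), "stop"),
    ((0.80, 0.30), "stop"),
    ((0.85, 0.25), "stop"),
    ((0.10, 0.90), "speed_limit"),
    ((0.20, 0.95), "speed_limit"),
    ((0.15, 0.85), "speed_limit"),
]

centroids = fit_centroids(training)

# A sample that never appeared in the training set
unseen = (0.75, 0.35)
prediction = predict(centroids, unseen)
print(prediction)
```

The classifier was never given a rule such as "red means stop". It derived the class centers from the examples, which is also why unrepresentative training data would shift those centers and degrade its predictions.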

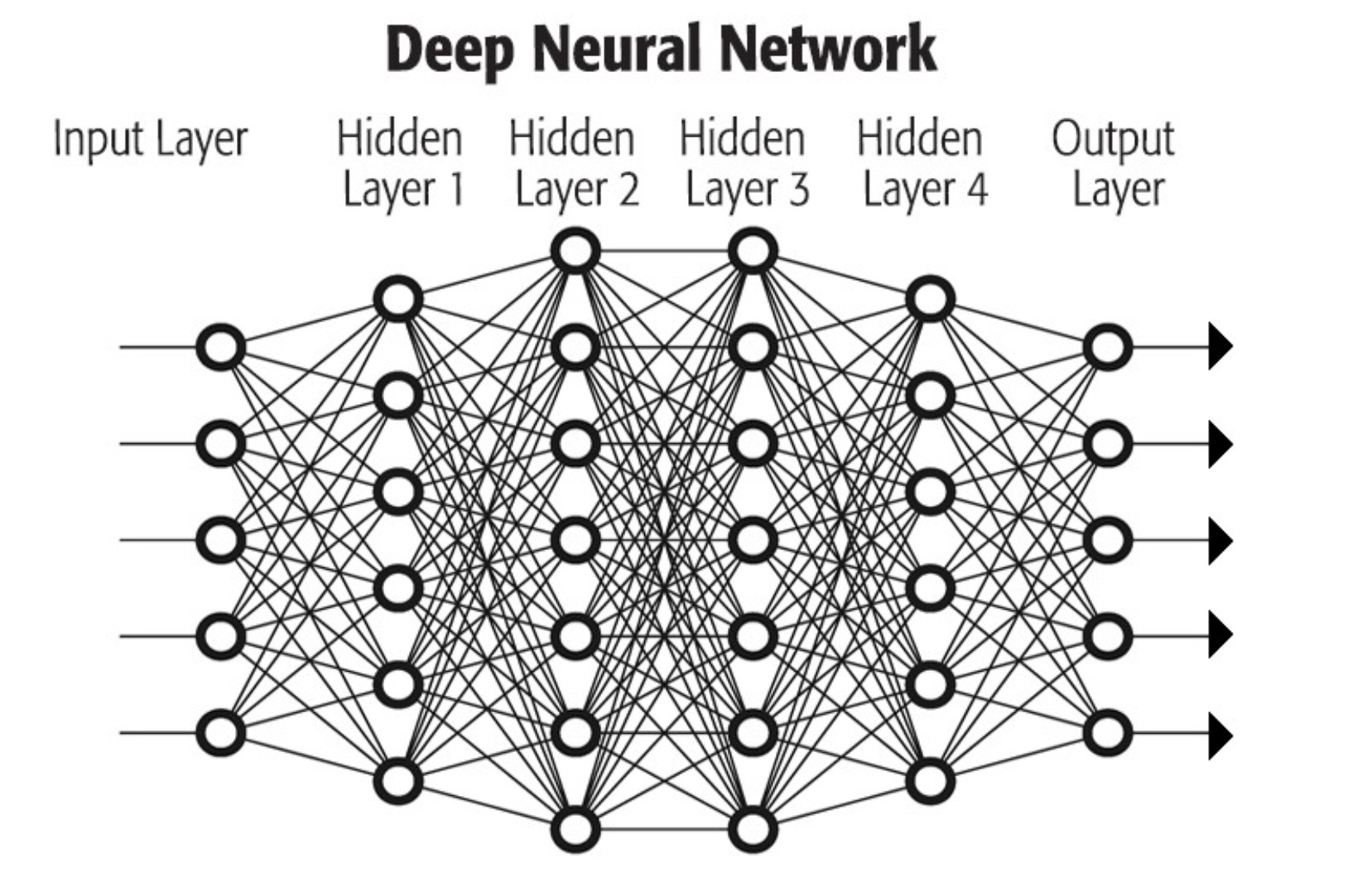

Deep Learning - Artificial Neural Networks

Deep learning is a form of machine learning that uses multi-layered artificial neural networks, loosely modeled on the way the human brain works. This enables the computer to take in data, draw conclusions, and learn from its mistakes by continually connecting previously learned data with new information.

Deep learning resembles the way a baby learns the concept of a “car”. The neural network is given a set of images, each labeled as either containing a car or not, and uses these examples to work out which visual features indicate a car.

The neural network begins by detecting the luminosity of each pixel, and then progresses to noticing shapes made out of the pixels, such as horizontal and vertical lines. Eventually, the system can identify individual parts of a car, like the wheels and headlights.
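The step from pixel brightness to lines can be sketched with a small convolution. In a trained network the filters are learned from data. In this illustration, hand-written Sobel kernels stand in for them, and the 5x5 image is a made-up example with a single vertical edge.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

# Hypothetical grayscale image: dark left side (0), bright right side (9)
image = [[0, 0, 9, 9, 9] for _ in range(5)]

# Sobel kernels: respond to brightness changes along x and along y
vertical_edge = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
horizontal_edge = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]

v_response = convolve2d(image, vertical_edge)
h_response = convolve2d(image, horizontal_edge)

print(v_response)  # strong response where the dark-to-bright edge sits
print(h_response)  # all zeros: the image contains no horizontal edge
```

Later layers of a network combine many such responses into larger shapes, which is how parts such as wheels and headlights eventually become detectable.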

At this stage, the network would classify any vehicle with wheels and headlights as a car. With further training on more finely labeled examples, it can learn to differentiate between vehicle types such as cars, motorcycles, trucks, and buses.

The example shows that the more data a neural network processes, the more it can learn. The deeper the network, with more layers stacked on one another, the more precise its findings tend to be.

Rather than relying on hand-designed models to recognize and classify data, deep learning algorithms build their own internal representations across the layers of the network. This reduces the need to manually design and introduce new models for new events, which is necessary in traditional machine learning.

Deep learning can be applied to a variety of areas, such as text, image, and facial recognition, as well as time series analysis for weather predictions or alert systems. The learning methods behind these applications fall into three categories: supervised learning, unsupervised learning, and reinforcement learning.

Neural networks generally become more capable as more data is fed into them and more hidden layers are added to their structure.
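A toy problem shows why hidden layers matter. The sketch below computes XOR ("one input or the other, but not both") with a single hidden layer. A single threshold neuron on its own cannot represent this function. The weights here are chosen by hand for illustration rather than learned, and the function names are hypothetical.

```python
def step(x):
    """Threshold activation: output 1 when the weighted input is positive."""
    return 1 if x > 0 else 0

def neuron(inputs, weights, bias):
    """One artificial neuron: weighted sum plus bias, then the activation."""
    return step(sum(i * w for i, w in zip(inputs, weights)) + bias)

def xor_network(x1, x2):
    # Hidden layer: two neurons that each detect a simpler pattern
    h_or = neuron((x1, x2), (1, 1), -0.5)    # fires if at least one input is 1
    h_and = neuron((x1, x2), (1, 1), -1.5)   # fires only if both inputs are 1
    # Output layer combines the hidden features: "OR but not AND"
    return neuron((h_or, h_and), (1, -1), -0.5)

table = {(a, b): xor_network(a, b) for a in (0, 1) for b in (0, 1)}
print(table)
```

The hidden neurons turn the raw inputs into intermediate features that the output neuron can separate. Deeper networks repeat this idea across many layers.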

Supervised and Unsupervised Learning

Supervised Learning

In supervised learning, the data is labeled and categorized before the machine learning algorithm studies it. One typical use is the automatic categorization of images. Manually assigning labels such as car, truck, or motorcycle to the images allows the algorithm to learn and recognize patterns. With enough repetition, the algorithm can assign new images to the appropriate category on its own.

Labeled data sets on a variety of subjects are publicly available. One example is the MNIST database of handwritten digits, which has 60,000 examples in the training set and 10,000 in the test set. It can be used to train machine learning models to recognize handwritten digits.
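The label, train, and classify loop described above can be sketched with a perceptron, one of the simplest supervised learners. This is an illustration under stated assumptions, not a production approach: the two-number feature vectors and the labels (1 for "truck", 0 for "car") are hypothetical stand-ins for real image features.

```python
def predict(weights, bias, features):
    """Classify as 1 when the weighted sum is positive, otherwise 0."""
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if score > 0 else 0

def train_perceptron(samples, epochs=20, lr=1.0):
    """Repeatedly show labeled samples and correct the weights on each mistake."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in samples:
            error = label - predict(weights, bias, features)
            if error:
                weights = [w + lr * error * x for w, x in zip(weights, features)]
                bias += lr * error
    return weights, bias

# Hypothetical labeled data: (feature vector, label), 1 = "truck", 0 = "car"
labeled = [
    ((2.0, 2.0), 1),
    ((3.0, 1.0), 1),
    ((3.0, 3.0), 1),
    ((0.0, 0.0), 0),
    ((1.0, 0.0), 0),
    ((0.0, 1.0), 0),
]

weights, bias = train_perceptron(labeled)

# After training, new samples are categorized without human labels
print(predict(weights, bias, (2.5, 2.5)))
print(predict(weights, bias, (0.5, 0.5)))
```

The labels drive every weight update. Without them the algorithm would have no error signal to learn from, which is the key difference from unsupervised learning.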

Unsupervised Learning

In unsupervised learning, the data carries no predetermined labels, so training has no target output to match. The algorithm must find structure, such as groups of similar items, on its own. Such techniques are useful for analyzing large data sets, for example in cybersecurity. Even so, unsupervised learning is less widely applied than supervised learning.
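As a sketch of how structure can be found without labels, the following one-dimensional k-means routine groups values around two centers. K-means is just one common unsupervised technique, picked here for illustration. The data (imagine requests per minute from different network clients) and the starting centroids are hypothetical.

```python
def kmeans_1d(values, centroids, iterations=10):
    """Alternate between assigning values to the nearest centroid and re-centering."""
    centroids = list(centroids)
    clusters = [[] for _ in centroids]
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        for v in values:
            nearest = min(range(len(centroids)), key=lambda i: abs(v - centroids[i]))
            clusters[nearest].append(v)
        # Move each centroid to the mean of its cluster (keep it if the cluster is empty)
        centroids = [
            sum(c) / len(c) if c else centroids[i]
            for i, c in enumerate(clusters)
        ]
    return centroids, clusters

# Hypothetical unlabeled measurements
values = [10, 12, 11, 95, 100, 98, 13, 97]

# Start from the first two values as initial guesses
centroids, clusters = kmeans_1d(values, centroids=values[:2])
print(centroids)
print(clusters)
```

Nothing told the algorithm which values were "normal" and which were "unusual". The two groups emerge from the data alone, and interpreting them is left to a person or a later processing step.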

Reinforcement Learning

Reinforcement learning is a technique in which an agent is trained to reach a desired goal by trial and error, with rewards and punishments used as guidance. This type of learning is exemplified in the development of computer chess programs, which can determine the effectiveness of a move or sequence of moves through simulations. AlphaGo, a DeepMind program for playing Go, is a combination of supervised and reinforcement learning.
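Trial-and-error learning can be sketched with tabular Q-learning, one standard reinforcement learning algorithm (not the method used by any particular chess or Go program). The environment is a made-up corridor of five cells in which only reaching the last cell is rewarded. All names and parameter values are illustrative assumptions, and a punishment would simply be a negative reward.

```python
import random

N_STATES = 5                 # corridor cells 0..4
GOAL = N_STATES - 1          # reaching cell 4 ends the episode
ACTIONS = ("left", "right")

def step(state, action):
    """Move one cell; the only reward is for arriving at the goal."""
    nxt = max(0, state - 1) if action == "left" else state + 1
    reward = 1.0 if nxt == GOAL else 0.0
    return nxt, reward

def train(episodes=500, alpha=0.5, gamma=0.9, seed=0):
    rng = random.Random(seed)
    q = [{a: 0.0 for a in ACTIONS} for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        while state != GOAL:
            action = rng.choice(ACTIONS)        # explore by trying random moves
            nxt, reward = step(state, action)
            best_next = max(q[nxt].values())    # goal cell values stay at 0
            # Nudge the estimate toward reward plus discounted future value
            q[state][action] += alpha * (reward + gamma * best_next - q[state][action])
            state = nxt
    return q

q_table = train()

# The learned policy: the best-valued action in each non-goal cell
policy = [max(ACTIONS, key=lambda a: q_table[s][a]) for s in range(GOAL)]
print(policy)
```

The agent is never told that moving right is correct. It discovers this because moves that lead toward the reward accumulate higher value estimates than moves that lead away from it.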

Inferencing

Inferencing is the process of deriving knowledge from existing data. AI algorithms learn from past experiences to draw conclusions and create new rules. This process can be divided into two types: inductive, which generalizes from specific cases to rules, and deductive, which applies general rules to specific cases. These two terms come from the field of logic.

Deductive Inferencing

Deductive inferencing is a process in which a conclusion is reached by combining and examining existing rules and conditions. This method of reasoning is highly accurate but does not allow for the discovery of new information.

Deductive reasoning moves from a general truth to a specific case. For example, if it is established that all fish live in water, and Nemo is known to be a fish, it follows that Nemo lives in water.
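The Nemo example can be written as a tiny rule engine. This is a minimal sketch: the representation of facts as (predicate, subject) pairs and of rules as (premise, conclusion) pairs is an assumption chosen for brevity.

```python
def deduce(facts, rules):
    """Apply every rule to every known fact until nothing new can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premise, conclusion in rules:
            for predicate, subject in list(known):
                if predicate == premise and (conclusion, subject) not in known:
                    known.add((conclusion, subject))
                    changed = True
    return known

# General rule: everything that is a fish lives in water
rules = [("is_fish", "lives_in_water")]
# Specific case: Nemo is a fish
facts = {("is_fish", "Nemo")}

derived = deduce(facts, rules)
print(sorted(derived))
```

The engine can only produce conclusions that the rules and facts already imply, which mirrors the point that deduction is reliable but does not discover new information.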

Inductive Inferencing

Rather than drawing individual conclusions from general principles, inductive inferencing works by forming broader generalizations from specific examples. This method can yield valuable insights, but may not always be accurate due to its origins in individual cases.

Here the reasoning runs the other way: from the observations that Nemo is a fish and that Nemo lives in water, the general rule that all fish live in water is inferred.
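Induction can be sketched as looking for properties shared by every observed example of a category. The observations below are hypothetical, and the function is a deliberately naive illustration rather than a real learning algorithm.

```python
def induce_rules(observations):
    """Propose 'all X have property P' for each P seen in every observed X."""
    by_category = {}
    for category, properties in observations:
        by_category.setdefault(category, []).append(set(properties))
    return {
        category: set.intersection(*prop_sets)
        for category, prop_sets in by_category.items()
    }

# Hypothetical individual observations of fish
observations = [
    ("fish", {"lives_in_water", "orange"}),   # Nemo
    ("fish", {"lives_in_water", "blue"}),     # a second observed fish
    ("fish", {"lives_in_water", "grey"}),     # a third observed fish
]

rules = induce_rules(observations)
print(rules)

# One contradicting observation is enough to invalidate the generalization
revised = induce_rules(observations + [("fish", {"grey"})])
print(revised)
```

The second call shows why inductive conclusions are useful but not guaranteed: the rule held only for the cases that happened to be observed.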

Perception and Sensor Fusion

Sensor fusion is a process of collecting data from various sensors, such as lidar, radar, ultrasound, microphones, and cameras, and combining it to create a comprehensive view of the environment. This data is then processed using AI algorithms and parallel processor technology, enabling the AI to make intelligent decisions in real time.
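One simple way to combine readings is inverse-variance weighting, in which more precise sensors get more influence. This is a sketch of a single fusion step under stated assumptions, not a description of any specific product: the distance readings and variances are made up, and real systems typically use more elaborate methods such as Kalman filters.

```python
def fuse(measurements):
    """Fuse (value, variance) pairs by weighting each value with 1 / variance."""
    weights = [1.0 / variance for _, variance in measurements]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, measurements)) / total
    fused_variance = 1.0 / total
    return value, fused_variance

# Hypothetical distance-to-obstacle estimates in meters: (reading, variance)
lidar = (20.0, 0.25)    # most precise sensor in this example
radar = (21.0, 1.0)
camera = (19.0, 4.0)    # least precise sensor in this example

distance, variance = fuse([lidar, radar, camera])
print(round(distance, 3), round(variance, 3))
```

The fused estimate sits closest to the lidar reading because lidar has the smallest variance here, and the fused variance is lower than that of any single sensor, which is the practical benefit of combining them.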

Perception is commonly used to enable vehicles to operate with partial or full autonomy, or to power advanced driver-assistance features.
